MACHINE LEARNING MAKES WIDEX HEARING AIDS SMARTER

Widex hearing aids constantly work to perfect every listening situation by combining the user’s own real-time input with data from every other Widex user. It’s a paradigm shift that changes what a hearing aid can do.

Widex Moment Sheer, MOMENT, and EVOKE

These hearing aid lines offer a unique feature that is a true first in the industry.

Widex calls it machine learning. Using an app on your smartphone, you can teach the hearing system how you want to hear. The app generates alternative combinations of sound settings for you to try, based on your current listening environment and your hearing goal. Each time you tell the app which proposed setting you prefer, it learns how to adjust the hearing aids, much like an optometrist asking “better or worse?” during an eye exam. Over time, your answers help the hearing aids learn which combinations of settings you prefer in different types of situations. You are an individual with your own preferences, and hearing aids that can take those preferences into account are very powerful.
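The “better or worse” loop can be sketched as a series of A/B comparisons. The Python below is a minimal, hypothetical illustration, not Widex’s algorithm (which the article does not describe). It assumes three settings (low, mid, high) on a 0 to 12 scale and swaps in simple coordinate-wise hill climbing: nudge one setting at a time and keep whichever version the listener prefers. The `refine` function, the `prefers` callback, and the simulated listener with a hidden ideal are all assumptions made for illustration.

```python
from typing import Callable, Tuple

# Hypothetical setting: (low, mid, high) levels, each on a 0-12 scale.
Setting = Tuple[int, int, int]
LEVELS = range(13)


def refine(
    start: Setting,
    prefers: Callable[[Setting, Setting], bool],
    max_steps: int = 20,
) -> Tuple[Setting, int]:
    """Coordinate-wise hill climbing driven by A/B answers (illustrative only).

    prefers(a, b) returns True when the listener prefers setting a over b.
    Each call counts as one interactive step. Returns the final setting and
    the number of comparisons used.
    """
    current = start
    steps = 0
    improved = True
    while improved and steps < max_steps:
        improved = False
        for band in range(3):
            for delta in (-1, 1):
                level = current[band] + delta
                # Skip moves off the scale or past the comparison budget.
                if level not in LEVELS or steps >= max_steps:
                    continue
                candidate = current[:band] + (level,) + current[band + 1:]
                steps += 1
                if prefers(candidate, current):  # "Is the new one better?"
                    current = candidate
                    improved = True
    return current, steps


# Simulated listener (an assumption): prefers whichever setting is
# closer to a hidden ideal, measured as total level difference.
IDEAL: Setting = (4, 6, 9)


def distance(s: Setting) -> int:
    return sum(abs(x - y) for x, y in zip(s, IDEAL))


def simulated_listener(a: Setting, b: Setting) -> bool:
    return distance(a) < distance(b)


best, used = refine((6, 6, 6), simulated_listener)
print(best, used)  # (4, 6, 9) 20
```

In this toy run the loop reaches the simulated listener’s ideal within a 20-comparison budget. That says nothing about how SoundSense Learn performs with real listeners, whose answers can be inconsistent and whose preferences are not a simple distance.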

SO SIMPLE ANYONE CAN USE IT

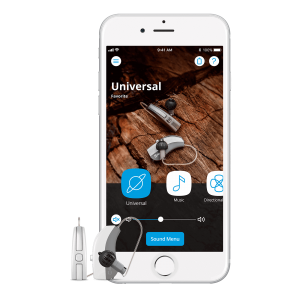

Once you’ve been fitted for your hearing aids, you can use the Widex MOMENT or EVOKE app to take personal control of your hearing.

All you have to do is listen to two sound profiles and choose the one you prefer. There are no complex controls to learn. And the more you use the app, the more your Widex Moment Sheer, MOMENT, or EVOKE hearing aids learn about how you want to hear.

Your hearing aids never stop evolving.

MACHINE LEARNING HELPS HEARING

Every time someone uses Widex’s interactive, machine learning-based SoundSense Technology, Widex learns more about how users prefer to hear in millions of different listening situations.

Widex engineers use this data to create more precise algorithms for firmware updates, so these hearing aids keep getting better for their users. The hearing aid you’re wearing today can evolve to become even better tomorrow.

EVOLVES IN REAL-WORLD CONDITIONS

The Widex MOMENT and EVOKE are the world’s first hearing aids to use machine learning. Every day, the technology helps each user shape how they experience sound. Plus, these hearing aids learn from the listening preferences of users all over the world, moving the evolution toward better hearing out of the lab and the clinic and into the real world.

ONCE TOUCHED, ALWAYS REMEMBERED

The Widex Moment Sheer, MOMENT, and EVOKE hearing aids feature a one-touch control that remembers the user’s preferred settings across multiple parameters – for every sound environment. This means that you immediately experience optimal hearing, even when visiting an entirely new location.
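One way to picture a control that remembers settings “for every sound environment” is a lookup keyed by the type of environment rather than the physical place, which would explain how a new location of a familiar type can still get your preferred settings. The minimal Python sketch below is a hypothetical illustration only: the `PreferenceMemory` class, the environment labels, the default fallback, and the (low, mid, high) setting tuple are assumptions, not Widex’s implementation.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple

# Hypothetical (low, mid, high) setting levels.
Setting = Tuple[int, int, int]


@dataclass
class PreferenceMemory:
    """Illustrative store of preferred settings per type of sound environment."""

    default: Setting
    saved: Dict[str, Setting] = field(default_factory=dict)

    def remember(self, environment: str, setting: Setting) -> None:
        # Overwrite any earlier preference for this environment type.
        self.saved[environment] = setting

    def recall(self, environment: str) -> Setting:
        # Fall back to the default settings for environments never saved.
        return self.saved.get(environment, self.default)


memory = PreferenceMemory(default=(6, 6, 6))
memory.remember("restaurant", (4, 7, 8))

# A new restaurant would still be labeled "restaurant" (assumed classifier),
# so the saved preference applies there too.
print(memory.recall("restaurant"))  # (4, 7, 8)
print(memory.recall("concert"))     # (6, 6, 6)
```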

A recent article published on hearinghealthmatters.org examines the differences between AI and machine learning in hearing aids and what those differences mean in practice. It also looks at recent survey results for the machine learning-based SoundSense Learn feature, which is available in the WIDEX EVOKE, Moment Sheer, and MOMENT hearing aids.

The AI movement grew out of a question: could a computer convince a human that they were talking to another person rather than a machine? In 1950, Alan Turing proposed a way to test this, which became known as the Turing Test. Since then, computer scientists have pursued machines that can perform or mimic human cognitive processes, and that pursuit is how AI and machine learning became the important technologies they are today.

What’s the difference between AI and machine learning?

AI is an attempt to have a computer perform tasks that humans normally do, such as driving a car, speaking, and recognizing faces. These are examples of what we consider learned tasks. Machine learning, a branch of AI, focuses on continued learning and ongoing problem solving that can go beyond human capabilities instead of just mimicking them.

What do AI and machine learning do for hearing aids?

With AI, you can, for instance, adjust one hearing aid and have the other side automatically follow the adjustment. A sound classifier that triggers changes to features and gain in specific environments is another example of AI in hearing aids.

Machine learning takes it a step further by learning from a user’s interactions, intentions, and preferences about how they like to hear in a specific environment. These intentions and preferences can only come from connecting machine learning to a human.

Hearing aids can only act on the information they capture. If a hearing aid captures only limited information, its ability to adapt to what’s happening in the acoustic landscape is limited too.

Widex recently launched the SoundSense Learn technology. What does it do?

SoundSense Learn takes a well-researched machine learning approach to individual adjustment of the hearing aid. It compares complex combinations of settings by collecting user input through a simplified interface that is easy for the user to manage.

Suppose there are three acoustic parameters (low, mid, and high frequencies), and each can be set to 13 different levels. That gives 13 × 13 × 13 = 2,197 combinations of the three settings. Comparing every combination against every other one to find the optimal listening settings would take more than two million (2,412,306) paired comparisons.
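The arithmetic behind these figures is easy to check. This short Python snippet counts the combinations (13 levels for each of three parameters) and the number of distinct pairs among them, which is what comparing every combination against every other one requires. For contrast, it also counts the comparisons a simple “keep the winner” scan would need, assuming the listener’s answers are perfectly consistent.

```python
from itertools import product
from math import comb

LEVELS = 13      # levels per parameter, as in the example above
PARAMETERS = 3   # low, mid, and high frequencies

# Every possible (low, mid, high) setting.
combinations = list(product(range(LEVELS), repeat=PARAMETERS))
n = len(combinations)

all_pairs = comb(n, 2)  # compare every combination with every other one
knockout = n - 1        # keep-the-winner scan: one comparison per challenger

print(n)          # 2197
print(all_pairs)  # 2412306
print(knockout)   # 2196
```

Either way, exhaustive comparison is far beyond the 20 interactive steps SoundSense Learn is said to need.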

No person could work through that many comparisons to find their optimal setting. SoundSense Learn, however, can reach the optimal outcome in 20 interactive steps with a human, or fewer.

The listener gets immediate gratification without changing any of the programs the hearing healthcare professional has set up. Yet the listener still has the power to refine their acoustic settings to match their specific listening intention in real time, right here and right now. That is an example of a symbiotic collaboration that goes beyond what a human can achieve alone.

How does this kind of machine learning benefit the hearing aid user?

It allows hearing aid users to personalize their soundscape to match their preferences and intentions. Previously, a hearing aid user would have to try to remember all the details of a difficult listening situation so they could describe it to their hearing healthcare professional later. That’s really hard to do.

Collecting anonymous data from SoundSense Learn and understanding how it’s used.

One area worth highlighting is the workplace, where users created programs so they could reuse their preferred settings. Widex engineers found 141 programs that users had created and reused in their workplaces, and they were all very different.

A couple of years ago, a MarkeTrak study reported that 83% of hearing aid users were satisfied with their hearing aids in their workplace. That still leaves 17% who are either not satisfied with the sound in their workplace or would like it to be more customized. SoundSense Learn is a powerful and effective way for them to achieve that goal.

Examples of machine learning or AI in the hearing aid industry.

Currently, Widex is the only manufacturer that uses real-time machine learning. Other hearing aid manufacturers are using features such as motion sensors for fall detection and Internet of Things connectivity, but Widex’s concern is that none of these features focus on sound quality, client preference, or the client’s listening intentions.

In the future, real-life applications of machine learning and AI will go beyond what a human can achieve alone.

We are on the verge of bringing never-before-seen technology into the hearing industry. We can only imagine what the future holds, but we will leave that to the incredible engineers in the hearing aid industry.

For more information on Widex MOMENT or EVOKE hearing aids that use machine learning, please visit the Widex Hearing Aids page on this website.

The use of the Widex logo or name and other relevant educational materials on this website is purely for informational purposes about the products we offer for sale.